Model predictive control: real-time issues and RBF-ANN implementation

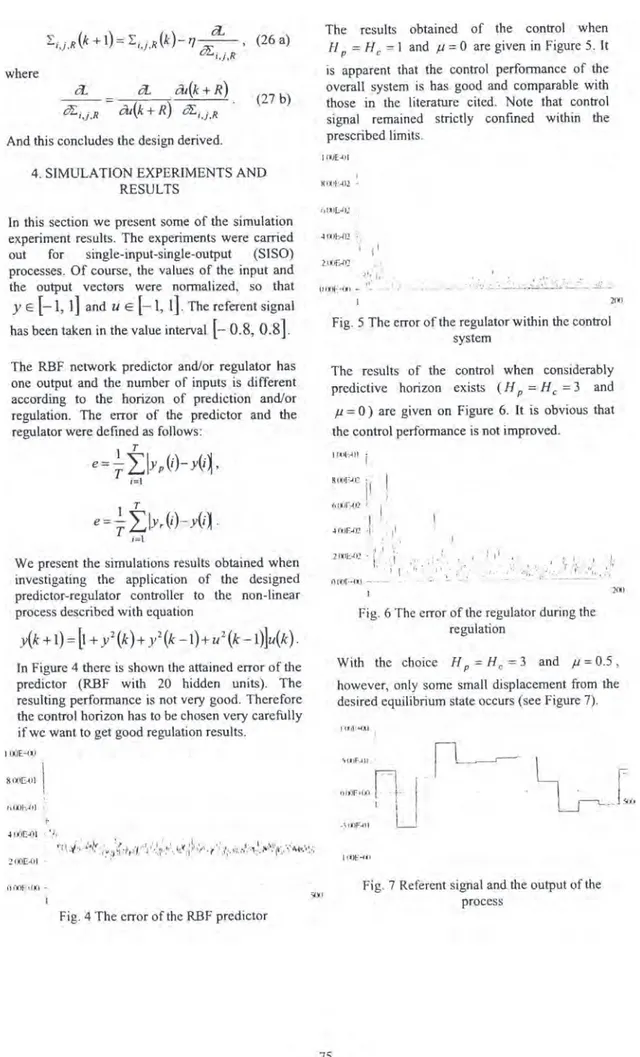
