··

's

-«и ν·'5^·:.;..-: ..

^

%✓ -.,ν,ΜΤ

«,*-'

■»:

J

.■ ..

XON-INTERIOR PIECEWISE-LLXEAR PATHW AY'S

TO

SOLUTIONS OF OVERDETERMIXED

LINEAR SYSTEMS

A THESIS

SUBMITTED TO THE DEPARTMENT OF INDUSTRIAL

ENGINEERING

AND THE INSTITUTE OF ENGINEERING AND SCIENCE

OF BILKENT UNIVERSITY

IN PARTIAL FULFILLMENT OF THE REQlTRExMENTS

FOR THE DEGREE OF

MASTER OF SCIENCE

By

Samir Elhedhli

June, 1996

Jamie....

i// J

I certify that I have read this thesis and that in my opinion it is fully adeciuate.

in scope and in quality, as a thesis for the degree of .Master of Science.

Pınar

(,\d visor)

I certify that I have read this thesis and that in my opinion it is fully adequate,

in scope and in quality, as a thesis for th^e degree of Master of Science.

I certify that I have read this thesis and that in my opinion it is fully adequate,

in scope and in quality, as a thesis for the degree of Master of Science.

Assist. Prof. Tuğrul Dayar

.Approved for the Institute of Engineering and Sciences:

Prof. Mehmet Krttay

(9л

(i 4-6.4

ABSTRACT

X O N -IN T E R IO R P I E C E W IS E -L IX E A R P A T H W A Y S T O

(y,

S O L U T IO N S O F O V E R D E T E R M IX E D L I X E A R S Y S T E M S

Samir Elhedhli

M .S. in Industrial Engineering

Supervisor: Assist. Prof. M ustafa Ç . Pınar

June. 1996

In this thesis, a new characterization of

solutions to overdetermined sys

tems of linear equations is described based on a simple quadratic penalty func

tion, which is used to change the problem into an unconstrained one. Piecewise-

linear non-interior pathways to the set of optimal solutions are generated from

the minimization of the unconstrained function. It is shown that the entire

set of

solutions is obtained from the paths for sufficiently small values of

a scalar parameter. As a consequence, a new finite penalty algorithm is given

for fx, problems. The algorithm is implemented and exhaustively tested us

ing random and function approximation problems. .A comparison with the

Barrodale-Phillips algorithm is also done. The results indicate that the new

algorithm shows promising performance on random (non-function approxima

tion) problems.

Key words:

Optimization; Overdetermined Linear Systems, Quadratic

Penalty Functions, Characterization.

ÖZET

D O Ğ R U S A L ^oc P R O B L E M İ İÇ İN BİR P A R Ç A L I

D O Ğ R U S A L DIŞ N O K T A A L G O R İT M A S I

Saınir Elhedhli

Endüstri Mühendisliği Bölüm ü Yüksek Lisans

Tez Yöneticisi: Yrd. Doç. Mustafa Ç. Pınar

Haziran, 1996

Bu tez çalışmasında, doğrusal

problemi için yeni bir algoritma önerilmiştir.

Algoritma karesel bir ceza fonksiyonunun problemin doğrusal programlama

formülasyonuna uygulanması ile elde edilmiştir. Karesel ceza fonksiyonunun

çözüm kümesi parçalı doğrusal bir yol izleyerek esas problemin ( ) çözüm

kümesine ulaşır. Algoritmanın sonlu sayıda adımda optimal çözüme ulaştığı

gösterilmiştir. .Algoritma bilgisayarda programlanmış ve değişik problemler

üzerinde denenmiştir. .Ayrıca optimizasyon literatüründe en iyi bilinen Barrodale-

Phillips simplex algoritması ile karşılaştırılmıştır.

Anahtar sözcükler:

Problemi, Doğrusal Sistemler, Karesel Ceza Fonksiy

onu, Çözüm Kümesi Karakterizasyonu.

ACKNOWLEDGEMENT

I am indebted to Assist. Prof. Mustafa Ç. Pınar for his invaluable guidance,

encouragement and the enthusiasm which he inspired on me during this study.

I am also indebted to Assoc. Prof. Mustafa Akgül and Assist. Prof. Tuğrul

Dayar for showing keen interest on the subject matter and accepting to read

and review this thesis.

I would like to thank my office mates Alev Kaya and Murat Bayiz for their

patience and moral support.

I would like also to thank Abdullah Daşçı for his help during the preparation

of this thesis.

Finally, I would like to thank Fatma Gzara for everything.

Contents

1 Introduction

2

2 Literature Review

3

2 . 1

Historical Background ...

3

2 .2

The Algorithm of Barrodale and Phillips (1 9 7 -5 )...

4

2.3

The Algorithm of Bartels, Conn and Charalambous (1978) . . .

5

2.4

The Algorithm of Ruzinsky and Olsen (1989)

6

2.0

The .Algorithm of Coleman and Li (1 9 9 2 )...

S

2.6

Zhang’s -\lgorithm (1993)

9

3

A Quadratic Penalty Function Approach

11

3.1

Introduction...

1 1

3.2

Pathways To

Solutions

13

3.2.1

The Minimizers of F

...

14

3.2.2

Characterization of

S o lu t io n s ...

17

3.3

Extended Binary V ectors... 24

CONTENrS

vm

3.3

. 1

Behavior of the Set of Minitnizcrs near t he Feasible Bound

ary

2.5

3.4

The Penalty .Algorithm...

28

3.4.1

Computing an Unconstrained M in im ize!·...

>9

3.4.2

Reducing t ...

3

q

3.5

Finite C onvergence...

3

[

4 Example

34

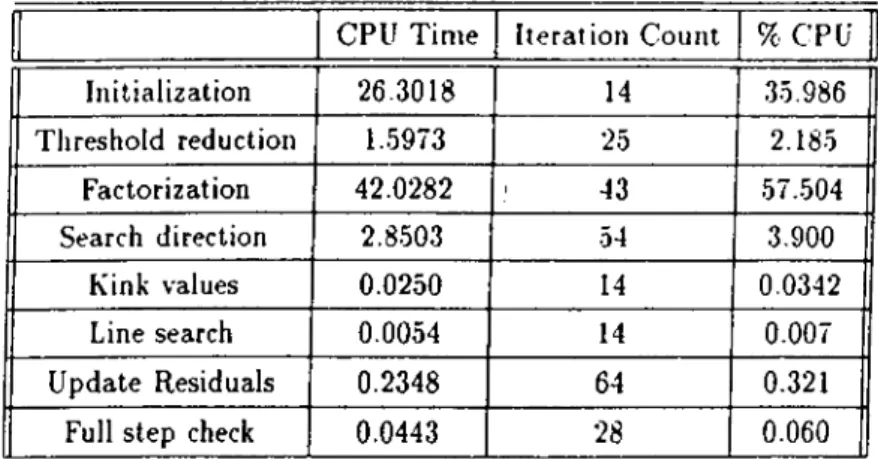

5 Numerical Issues and Implementation

38

5 .1

The Penalty A lgorithm ...

3 3

5.1.1

D e s crip tio n ...

3 3

5.1.2

Computing a minimizer of F (jr,y ,t)

39

5.1.3

Line S e a r c h ...

4 1

5.1.4

Reducing t ...

42

5.2

Implementation and Linear A lg e b ra ...

44

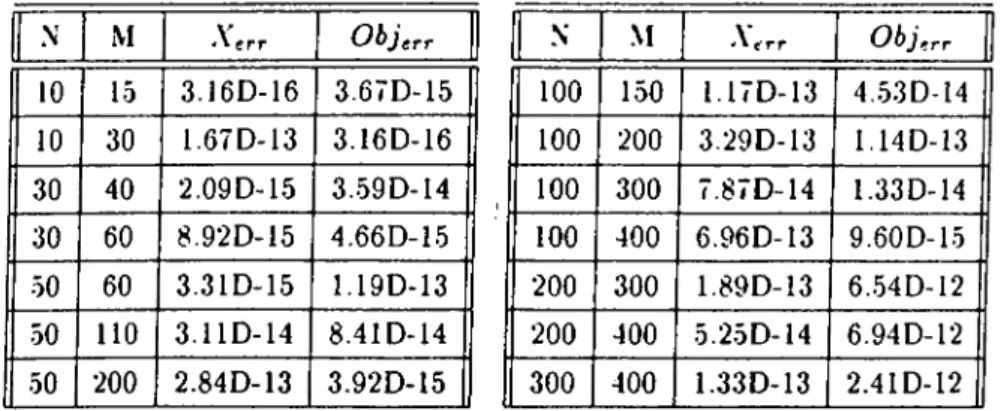

6

Numerical Testing

46

6.1

The Test P ro b le m s ... 46

6.2

Behavior of the algorithm ...

4

§

6.3

Initialization...

5 3

CONTENTS

7 Conclusion

IX

List of Figures

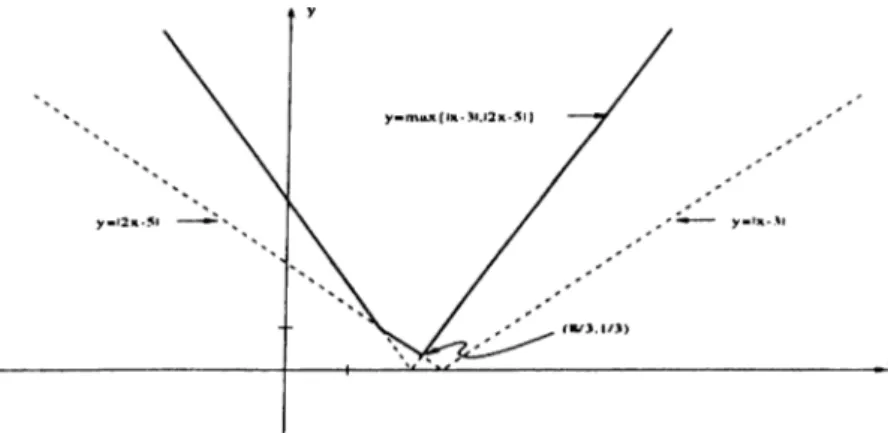

4.1

Plot of the function max(|r — 3|. |2jr — 5|)...

.34

4.2

The division of

according to the values of 0 i and

0 2

.

35

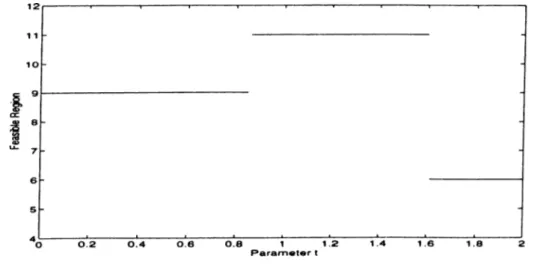

4.3

The region that contains the minimizer for different values of

t.

36

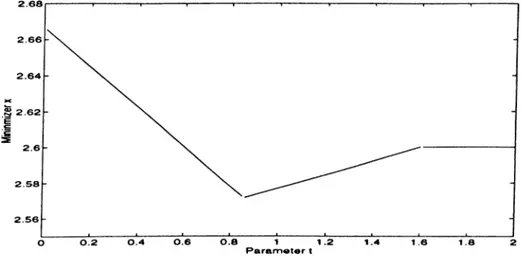

4.4

The minimizer x as a function of t ...

37

4.5

The minimizer

y as, s.

function of t ...

37

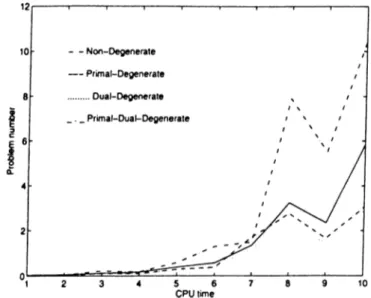

6.1

CPU time on nondegenerate, primal, dual and primal-dual de

generate problems...

51

6.2

Comparison of the different initialization schemes of to...

63

6.3

CPU time comparison between LINFSOL and B-P for nonde

generate problems... 63

6.4

CPU time comparison between LINFSOL and B-P for different

ratios of m to n on nondegenerate problems.

64

6.5

CPU time comparison for primal degenerate problems...64

6.6

CPU time ratio versus m to n ratio for primal degenerate prob

lems... 65

6.7

CPU time comparison for dual degenerate problems.

65

LIST OF FIGURES

XI

6.8

CPU time ratio versus m to

n

ratio for dual degenerate problems.

66

6.9 CPU time comparison for primal-dual degenerate problems.

66

6 .1 0

CPI time ratio versus

m

to

n

ratio for primal-dual degenerate

problems...

g j

Xl l

Glossary of Symbols and Notations

X

y

(■■»·, i/)

cL

a,

T

e

^ n + l

0

F{ z , t )

b

/1

0

Q

p

P

vector of length n.

scalar (€ -R).

pair of vector

x

and scalar

y.

(n

+ l)-vector with

Z{

=

X{

for i =

1

, ..,n and

=

y.

(n

+ l)-vector with d,; =

for

i =

and

=

dy.

j-th column of matrix

A.

¿-th row of matrix

A.

vector of all I ’s of appropriate length, i.e, e ^ = ( l ,

1

)^.

(n + l)-vector with

Ci = 0

for

i =

1

,

..,n

and e„+i =

1

.

vector or matrix of zeroes of appropriate length.

=

F { x , y , t ) = Ft{x,y).

277

r-vector of the form ^ , where

b

G R '".

(2m)

X

(n + l)-m atrix of the form

- A - e

A - e

01

_0

0

02

where

0

i and

0 2

are

(2m

X

2m) matrix of the form

771

X

m matrices.

(2m)

X

(n + l)-m atrix which is equivalent to 0 A .

(?2 +

1)

X (?2 +

l)-m atrix which is equivalent to

Q ^ Q .

Chapter 1

Introduction

The

problem has many applications in a wide range of fields, and for

that reason, fast, accurate and stable methods to solve it were all the time

sought. The first attempts seem to have been made by statisticians cis the

problem arises frequently in data fitting analysis. More efficient ways, however,

were designed when it was realized that the problem is equivalent to a linear

program, and , hence it can be solved via any linear programming method.

Since then, the solution approaches w'ere following developments in linear

programming. Barrodale and Phillips [

1

] designed a simplex-like method in

which the special structure of the coefficient matrix is exploited. .After Kar-

rnarkar's outstanding paper which opened the area of interior-point methods.

Ruzinsky and Olsen [14] used the same ideas to design a polynomial algorithm

for the

problem. Later, large developments in the interior-point area led to

the solution method of Zhang [16], where an affine scaling approach is used.

.Again, in this thesis, the linear programming formulation of the

problem

is used. The constraints are coupled with the objective function through the

use of a simple quadratic penalty function and a smoothing parameter. In

theory, a .solution to the original problem could be obtained from a solution

to the unconstrained problem w'hen the parameter tends to zero. It is shown,

however, that it is not necessary to let the parameter converge to zero and that

C H A P T E R 1. INTRODUCTION

there is a threshold value, for which a solution could be detected. This remark

is essential both for the efficiency and the numerical stability of the designed

algorithm. The approach could be termed as an

exterior point

approach, as

a .seci’uence of non-interior iterates is generated that satisfies primal feasibility

only upon termination.

The organization of the thesis is as follows. In the ne.xt chapter a summary

of the most known algorithms for the

tx,

problem is given. Then a new char

acterization of the solution set is done in the third chapter, followed with a

numerical example in the fourth chapter. Chapter five is devoted to the anal

ysis and design of the algorithm. Numerical testing and a comparison with

the Barrodale-Phillips algorithm is provided in the fifth chapter. The thesis

concludes with some remarks and suggestions for future research.

Chapter 2

Literature Review

2.1

Historical Background

The

approximation problem is to find or € /2” that minimizes

||

j

4

i

— 6 ||oo = rnax

\afx

— 6 ,|

1 = 1 ..m

where

A

€

with columns

aj

and rows

a j

and

b .y ^

/2'” .

The problem is also known as

minimax

or the

Chebychev

approximation prob

lem, and is believed to have been first posed by the French mathematician

Laplace

in 1799 in a study of inconsistent linear systems . However the Rus

sian mathematician

Chebychev

seems to be the first to have studied deeply

such class of problems in the 1850’s [15].

One of the first systematic methods to solve the

problem was due to Stiefel

(1959), who points out that for a subset J of n -h

1

indices ( among the

2 m

),

either

min max

\ajx

—

6

,| < min max

\ajx

—

6

,|

j-eft"

i e J

x e / ? " i = i . . m ' ‘

'

or, an other subset

J*

of indices can be formed from

J

by interchanging one

index, so that

min max

\ajx —

6

,| < min max jafx —

6

,|.

CU.\rTI-R 2. LITERArURE REVIEW

riie method which he called the

Exchange Method

[13] is similar in principal

to the simplex method, as n +

1

equations among the

2m .

1

''^ “ 'J j^^'j =

are

solved for at a time. Then either max.^j |/·,(J·)| < max

,¿7

|/·,(.r)| and optimality

is reached, or an exchange is made. The method requires that at each step the

matrix picked from

>s of full rank.

■\mong the first to notice that the

problem could be formulated as a linear

program was Zuhovitzki in the 1950’s [15], who used the primal formulation.

min y

[LIA F L P ]

s.t

Ax — ye < b

Ax A ye > b

to design an algorithm. Kelley (1958), however was the first to use the dual

formulation

max

b^(v — u)

s.t

A'^(

v

—

u

) =

0

e^(u

+ i’) =

1

u,

V

>

0

which decreases the size of the problem and puts it directly into a standard

form. In the late 1960’s versions of the simplex method were designed for the

problem, the first of which is due to Barrodale and Young (1966) and Bartels

and Golub (1968) [15].

[ L IN F L D ]

2.2

The Algorithm of Barrodale and Phillips

(1975)

Barrodale and Phillips [1] use a modified simplex algorithm that exploits the

special structure of

[ L IN F L D ] .

Like the simplex algorithm, their algorithm

moves from one vertex to a neighboring one that provides a decrease in the

objective function. The structure of the problem, however, makes the exchange

of u, and

Vi

easy to accomplish. Consider the constraint matrix

, the

columns corresponding to u, and i>, are ["j*'] and

respectively, and suppose

that the basis contains the variables ( x i , ...,

u·} and the ( n +

1

) x (n +

1

)-niatrix

B

= [ w i,..., w „, w„+i] where w 's

j =

L ..,n are columns of length

n

+

1

and w„+| =

and that r, will take the place of «, in the basis, then

instead of solving

n

r

-«i

ni A P T E R 2. LITERATURE REVIEW

r>

we solve

In other words.

is solved instead of

L

+

;=i

7 =

1

B x = —Vi

» , =

0

,

c, =

0

.

B x = Ui

where

B

= [ w i , w „ ] , and thus if

B~^

exists, then it is easy to solve for the

second system.

In the first phase of the algorithm, artificial variables are introduced and are

taken in the basis, then only variables « ,'s are allowed to enter the basis in

place of the artificial variables. At the end of the first phase, a basic feasible

solution is found where n + 1 constraints hold with equality. At that point,

the ordinary simplex algorithm is applied until optimality is reached.

2.3

The Algorithm of Bartels, Conn and Char-

alambous (1978)

This algorithm [2] is based upon solving the linear programming formulation

[LINFLP] through the use of an exact penalty function. Let [LINFLP] be

written as,

min

C

q

W

CIIAFTEIi 2. LITERATURE REVIEW

whcro.

Co

c. =

0,

1

- a .

t

^rn + i

---

.

/ -

1

... m.

^11

0m+i

— ^

1

·

ZO =

For a fixed parameter /z > 0, they define an unconstrained function,

2m

p{w, ¡/)

=

i

'C

q

W —

^ min(0,

c j w

—

3j),

j=i

(2.1)

and prove that for decreasing values of t/, a solution to [LINFLP] can be got

from a minimizer of

2 . 1

when

u

tends to zero.

The minimization of 2.1 is done through the choice of a descent direction

(null space projected gradient) and the computation of a step size (line search).

If a minimizer of 2.1 is detected for which primal feasibility is satisfied then

that solution is optimal. Otheriwse, if any of the constraints are violated then

1

/

is reduced and the function

p

is again minimized starting from the previous

point. Obviously, the algorithm needs a good starting point, and that was

mainly the subject of [-3].

2.4

The Algorithm of Ruzinsky and Olsen (1989)

This algorithm is a variant of Karmarkar’s algorithm applied to the dual linear

programming formulation [LINFLD], which evolves through the interior o f the

c u . \ P T K R 2 .

UTER.VrURE REVIEW

feasible region. It is mainly a rescaling technique coupled with a projected

gradient method. Putting [LINFLD] in a standard form, yields.

where.

A =

m in

c^x

s .t

Ax = b

X > 0 ,

.4 ^

- . 4 ^ '

r

;

i

=

1

^

—

0

1

c =

- b

b

X

=

u

V

The initial point is chosen as

wq

= Co —

*

A:th iteration,

Kar-niarkar would rescale the problem so that e is the center of the feasible region,

solve a weighted least squares problem to find a search direction, compute a

step size and update the primal variable until the duality gap is closed. This is

exactly what Ruzinsky and Olsen [14] did, the only difference is in the stopping

criteria. The algorithm is the following.

• In itialize:

u

v —

some small positive number.

• Itera te:

-

f = [u

1

-

i f ] ,

- r»:=diag(u ? + y2)^

d =

“ '^•=[[/^.4] [0 °j [/rj)"'[(/rj [o<?][/k]]’

-

r:=b — Ax,

- if

1

+

<

e

then

stop.

- else

* ¿1 := [nf(—

— r,)j; 62:=[vf(—clf^r + ri)], {Search direction}

* ^

wa.\(max,(-St,/u,),max,(S2,/v,) ’

(Step size)

* u : = u + Jb i; v .—v A ^$2,

(Update)

• Until

stop.

(Rescaling matrix)

(Least squares)

(Residual vector)

(Stopping criteria)

CUMn'ER 2. LITERATI RE REVIEW

2.5

The Algorithm of Coleman and Li (1992)

C o l e m a n a n Li [

4

] u s e a f o r m u l a t i o n o f t h e

p r o b l e m t h a t is b a s e d u p o n t h e

n u ll s p a c e o f .

4

. P r e c i s e l y , t h e y c o n s i d e r t h e ( m

— n)

x m m a t r i x

Z

w it h r a n k

(ni — n)

s u c h t h a t

AZ

= 0 t o f o r m u l a t e t h e p r o b l e m a s:

m in ||r||.:.; =

<t>{r)

Zr = Zb

m m

y

Zr = Zb

- y e

< r < ye

a s r = .

4

j

· —

b

le a d s t o

Zr = ZAx — Zb

a n d

Zr = Zb.

T h e f u n c t i o n

4

>{r)

is

n o n - d i f f e r e n t i a b l e a t t h e p o i n t s r w h e r e m o r e t h a n o n e r e s id u a l h a s m a x i m u m

m a g n i t u d e .

A s s u m e t h a t t h is is n o t t h e c a s e , a n d t h e r e is o n l y o n e i n d e x

j

f o r w h ic h |rj| = ||r||co; t h e n

6

{r)

is d i f f e r e n t ia b l e in t h e n e i g h b o r h o o d o f

t h e c u r r e n t p o i n t

r

a n d t h e n e a r - b y n o n - d i f f e r e n t i a b l e r e g io n is d e fin e d b y

lo l =

1

^·! f®*·

^ ^ j

-B y d e fi n in g ,

Ci=Sjrj-Siri i ^ j

a n d

= |r_,|e - | r|-|-Ir^lcj = T - V ,

w h e r e s = s i g n ( r ) a n d T is a s i m p l e e l e m e n t a r y m a t r i x d e f i n e d b y :

T

— [

S i e j , . , ,

Sj _ i C j—1. s ,

T h e n p r o b l e m b e c o m e s .

m in IIT cIloo

ZTc = Zb.

T h e a l g o r i t h m t h e n p r o c e e d s w i t h f in d in g a d e s c e n t d i r e c t i o n

d

u s in g a w e ll

c h o s e n c r i t e r i a s o t h a t b o t h g l o b a l c o n v e r g e n c e a n d t h e a b i l i t y t o c r o s s lin e s o f

n o n - d i f f e r e n t i a b i l i t y a r e e n h a n c e d ,

d =

-A{A^T-'^D-^T-\A)-^A'^9,

(

7/.

\PTEll 2. LITERATURE REVIEW

w h e r e

g = SjCj.

T h e c h o i c e o f

D

is d o n e in a w a y s o t h a t n e a r t h e s o lu t i o n

u n it X e w t o n s t e p s a r e t a k e n , a s

w i t h C ’ = d i a g ( c ) a n d

is a v a r i a b le t h a t e n c a p s u l a t e s t h e o p t i m a l i t y c o n d i t i o n s

a n d t e n d s t o z e r o a s t h e s o l u t i o n is r e a c h e d .

T h e n e x t s t e p is t o p e r f o r m a lin e s e a r c h a lo n g

d.

In o t h e r w o r d s . o ( r + a d )

is m i n i m i z e d w i t h r e s p e c t t o a . T h i s is d o n e b y c o n s i d e r i n g e a c h b r e a k p o in t

in t u r n , a d j u s t i n g t h e g r a d i e n t t o r e f le c t

a

s t e p ju s t b e y o n d t h e b r e a k p o i n t a n d

c h e c k i n g i f

d

c o n t i n u e s t o b e a d e s c e n t d i r e c t i o n . F i n a l ly

r

a n d A , ( t h e d u a l

v a r i a b l e ) a r e u p d a t e d a s,

r"*·

= r + ad

a n d

A"*· = ^ +

T~^D~^T~^d.

In b r i e f , t h e a l g o r i t h m s t a r t s b y a fe a s ib le p o in t

tq

s u c h t h a t

Zro

=

Zb,

m a k e s a r e s c a l in g t r a n s f o r m a t i o n , c o m p u t e s a s t e p s iz e u s in g a lin e s e a r c h a n d

p r o c e e d s i t e r a t i v e l y u n t il r e a c h i n g o p t i m a l i t y . T h e m a in w o r k in t h e a lg o r it h m

is d o n e in t h e s o l u t i o n o f t h e le a s t s q u a r e s p r o b l e m s .

2.6

Zhang’s Algorithm (1993)

■As t h e

7

.x, p r o b l e m is e q u i v a l e n t t o a lin e a r p r o g r a m . Z h a n g [

16

] u.ses a p r im a l-

d u a l i n t e r i o r p o i n t m e t h o d t o s o lv e f o r [ L I N F L P ] a n d [ L I N F L D j. T h e K a r u s h -

K u h n - T u c k e r o p t i m a l i t y c o n d i t i o n s c a n b e w r it t e n eis:

A^{u — v)

e^(u + u) —

1

F{p,q,u,v,i,y) =

pA Ax — yt — b

q

—

Ax — ye + b

Up

Uq

= 0 ,w h e r e

U

= d i a g ( u ) a n d

V

= d i a g ( u ) a n d

(p,q,u,v) >

0

.

CllAPTKR 2. LITERATURE REVIEW

1 0