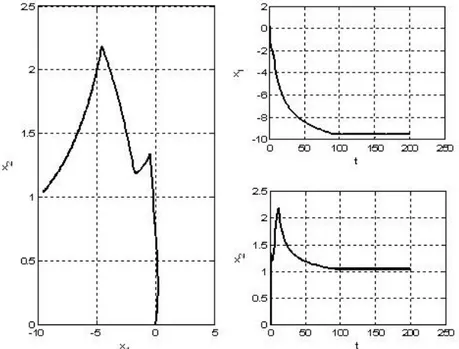

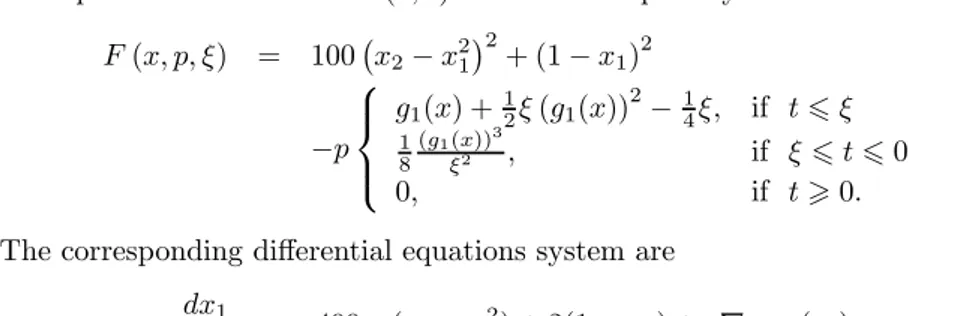

Solving NLP problems with dynamic system approach based on smoothed penalty function
